C07 | Optimization for Dynamic Mixed Reality User Interfaces

In this project, we aim to dynamically adapt the user interface during interaction with Cross-Reality (XR) applications on head-mounted displays (HMDs), to improve usability and ensure the user’s safety and comfort.

XR refers to any system that immerses the user in an interactive virtual or virtually augmented environment, such as Virtual Reality (VR) or Augmented Reality (AR). The virtual content is often placed in the space around the user’s body and can be directly manipulated with hands or controllers. This shift of virtual content from the 2D space of the computer screen to the 3D environment brings a myriad of new challenges in user interface (UI) and interaction design, due to the complex and dynamic context of interaction. Contexts to consider include the user, the physical environment, and the activity. For example, an AR application presenting a virtual button in mid-air in front of the user may occlude their view of their conversation partner, lead them to knock over the glass of water on the table when reaching for the button, and cause muscle fatigue if interacted with continuously.

We aim to address these challenges by optimizing 3D UIs with regard to the placement of information and interactive elements, as well as the employed interaction techniques and feedback, depending on the context of interaction.
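To make the idea of context-dependent placement concrete, the following is a minimal sketch and not the project's actual method. It scores candidate positions for a single UI element with a weighted sum of three cost terms that mirror the mid-air button example: occluding the conversation partner, risking a collision with a physical object, and ergonomic effort. All names (`Context`, `occlusion_cost`, `collision_cost`, `ergonomic_cost`, `best_placement`), the cost formulas, and the default thresholds are illustrative assumptions.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]  # (x, y, z) in metres, y pointing up


@dataclass(frozen=True)
class Context:
    """Hypothetical snapshot of the interaction context (all values assumed)."""
    head: Vec3
    shoulder: Vec3
    partner: Vec3      # position of the conversation partner
    obstacle: Vec3     # e.g., the glass of water on the table
    arm_length: float = 0.7


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _norm(v: Vec3) -> float:
    return math.sqrt(sum(c * c for c in v))


def occlusion_cost(p: Vec3, ctx: Context, cone: float = math.radians(30)) -> float:
    """1 when p lies on the line of sight to the partner, 0 outside the cone."""
    to_ui, to_partner = _sub(p, ctx.head), _sub(ctx.partner, ctx.head)
    dot = sum(a * b for a, b in zip(to_ui, to_partner))
    cos = max(-1.0, min(1.0, dot / (_norm(to_ui) * _norm(to_partner))))
    return max(0.0, 1.0 - math.acos(cos) / cone)


def collision_cost(p: Vec3, ctx: Context, safe_radius: float = 0.25) -> float:
    """1 at the obstacle, falling linearly to 0 at safe_radius."""
    return max(0.0, 1.0 - _norm(_sub(p, ctx.obstacle)) / safe_radius)


def ergonomic_cost(p: Vec3, ctx: Context) -> float:
    """Crude effort proxy: arm extension plus lifting the hand above the shoulder."""
    reach = min(1.0, _norm(_sub(p, ctx.shoulder)) / ctx.arm_length)
    lift = min(1.0, max(0.0, p[1] - ctx.shoulder[1]) / ctx.arm_length)
    return 0.5 * reach + 0.5 * lift


def total_cost(p: Vec3, ctx: Context, weights=(1.0, 1.0, 1.0)) -> float:
    """Weighted sum of the three cost terms (weights are assumptions)."""
    terms = (occlusion_cost(p, ctx), collision_cost(p, ctx), ergonomic_cost(p, ctx))
    return sum(w * t for w, t in zip(weights, terms))


def best_placement(candidates: list[Vec3], ctx: Context, weights=(1.0, 1.0, 1.0)) -> Vec3:
    """Return the candidate position with the lowest total cost."""
    return min(candidates, key=lambda p: total_cost(p, ctx, weights))
```

In this sketch, the weights express how the objectives are traded off against each other. Raising the first weight, for instance, would penalize placements in front of the conversation partner more strongly than placements that are tiring to reach.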

Research Questions

What UI characteristics can and should be adapted and how (e.g., placement, appearance, interaction technique, user representation, immersion, physicality)?

What are meaningful characteristics of the interaction context and how can they be quantified to facilitate real-time computation of optimization functions?

How can we handle conflicting optimization objectives and make their resolution apparent to the designer or user?

How much customizability does the user need during interaction, ranging from pre-defined modes to a fully adaptive system that learns over time?

How can we adapt the UI to effectively support collaboration and social interaction between multiple users?

What are meaningful and measurable success criteria for validating optimization results in user studies?

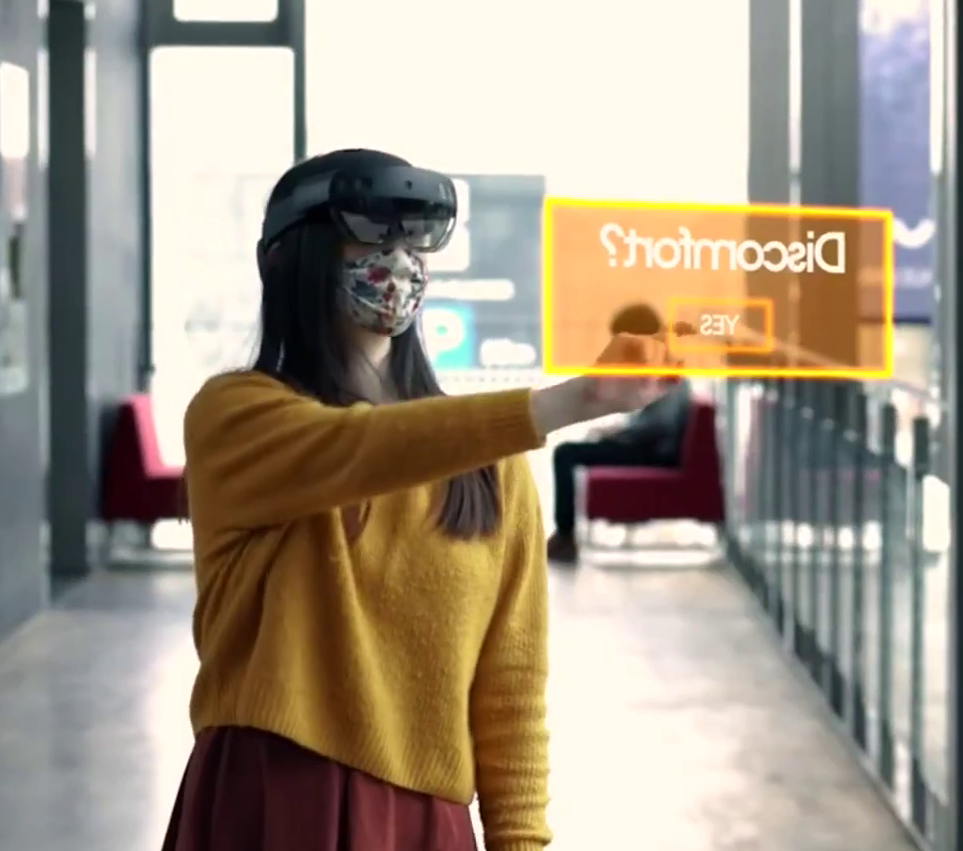

Fig. 1: Common mid-air interaction with head-mounted Augmented Reality systems

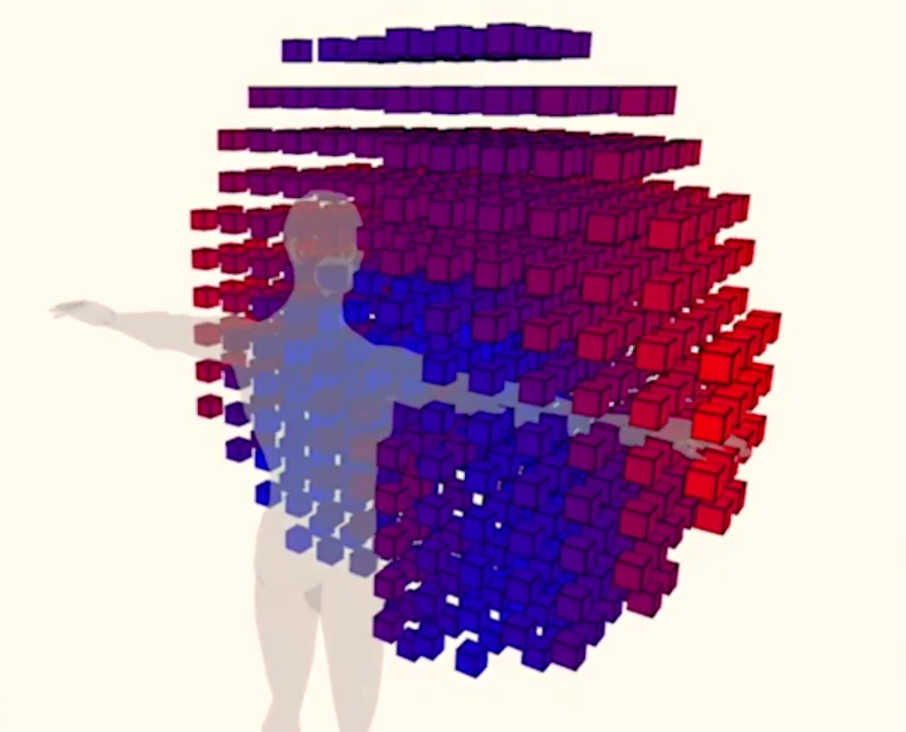

Fig. 2: Illustration of ergonomic cost in the user’s interaction space

Publications

- S. Hubenschmid et al., “Hybrid User Interfaces: Past, Present, and Future of Complementary Cross-Device Interaction in Mixed Reality,” IEEE Transactions on Visualization and Computer Graphics, pp. 1–20, Apr. 2026, doi: 10.1109/tvcg.2026.3683941.

- J. Wieland, D. Immanuel Fink, A. V. Reinschluessel, J. Häßler, T. Feuchtner, and H. Reiterer, “Using Digital Twins to Design and Evaluate Interactive Exhibitions: A Case Study with Handheld AR,” in Proceedings of the 2026 CHI Conference on Human Factors in Computing Systems, New York, NY, USA: ACM, Apr. 2026, pp. 1–26. doi: 10.1145/3772318.3790852.

- J. Wieland, S. Abed, A. V. Reinschluessel, J. Zagermann, H. Reiterer, and T. Feuchtner, “Extending the Fishing Reel - Improving Multiple Object Selection in VR Using Transparency and a Resizable Pointer,” in Proceedings of the 1st International Conference on Human-Computer Interaction in the Alps, New York, NY, USA: ACM, May 2026, pp. 1–7. doi: 10.1145/3780045.3780046.

- S. Hubenschmid et al., “SpatialMouse: A Hybrid Pointing Device for Seamless Interaction Across 2D and 3D Spaces,” in Proceedings of the 2025 31st ACM Symposium on Virtual Reality Software and Technology, New York, NY, USA: ACM, Nov. 2025, pp. 1–13. doi: 10.1145/3756884.3766047.

- S. Hubenschmid et al., “Revisiting Hybrid Input Devices for Immersive Analytics,” in Human Factors in Immersive Analytics Workshop at IEEE VIS 2025, Vienna, Nov. 2025.

- A. V. Reinschluessel et al., “Bridging Realities in a Heartbeat: How Integrating Heartbeat Signals Supports Collaboration in Mixed Reality,” in CHI Workshop on “Scaling Distributed Collaboration in Mixed Reality”, 2025. [Online]. Available: http://nbn-resolving.de/urn:nbn:de:bsz:352-2-c76xaw7uu3xa8

- Y. Cherif, C. Sayffaerth, F. Chiossi, and L. Schütz, “Stress by Design? The Influence of Online Exam Interfaces on Student Anxiety,” in Proceedings of the Mensch und Computer 2025, New York, NY, USA: ACM, Aug. 2025, pp. 705–710. doi: 10.1145/3743049.3748538.

- D. I. Fink, M. Skowronski, J. Zagermann, A. V. Reinschluessel, H. Reiterer, and T. Feuchtner, “There Is More to Avatars Than Visuals: Investigating Combinations of Visual and Auditory User Representations for Remote Collaboration in Augmented Reality,” in Proceedings of the ACM on Human-Computer Interaction, Association for Computing Machinery (ACM), 2024, pp. 540–568. doi: 10.1145/3698148.

- J. Wieland et al., “Investigating the Potential of Haptic Props for 3D Object Manipulation in Handheld AR,” IEEE Transactions on Visualization and Computer Graphics, vol. 31, Art. no. 9, 2024, doi: 10.1109/tvcg.2024.3495021.

- A. Zaky, J. Zagermann, H. Reiterer, and T. Feuchtner, “Opportunities and Challenges of Hybrid User Interfaces for Optimization of Mixed Reality Interfaces,” in 2023 IEEE International Symposium on Mixed and Augmented Reality Adjunct (ISMAR-Adjunct), 2023, pp. 215–219. [Online]. Available: https://ieeexplore.ieee.org/document/10322176

- J. Zagermann, S. Hubenschmid, D. I. Fink, J. Wieland, H. Reiterer, and T. Feuchtner, “Challenges and Opportunities for Collaborative Immersive Analytics with Hybrid User Interfaces,” in 2023 IEEE International Symposium on Mixed and Augmented Reality Adjunct (ISMAR-Adjunct), Los Alamitos, CA, USA: IEEE Computer Society, Oct. 2023, pp. 191–195. doi: 10.1109/ISMAR-Adjunct60411.2023.00044.

- A. V. Reinschluessel and J. Zagermann, “Exploring Hybrid User Interfaces for Surgery Planning,” in 2023 IEEE International Symposium on Mixed and Augmented Reality Adjunct (ISMAR-Adjunct), 2023, pp. 208–210. [Online]. Available: https://ieeexplore.ieee.org/abstract/document/10322244

- S. Hubenschmid, J. Zagermann, D. Leicht, H. Reiterer, and T. Feuchtner, “ARound the Smartphone: Investigating the Effects of Virtually-Extended Display Size on Spatial Memory,” in Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems (CHI ’23), New York, NY, USA: ACM, 2023. [Online]. Available: https://kops.uni-konstanz.de/server/api/core/bitstreams/6eecac2f-666f-4399-bec3-d8e607331164/content

- J. Zagermann et al., “Complementary Interfaces for Visual Computing,” it - Information Technology, vol. 64, pp. 145–154, 2022, doi: 10.1515/itit-2022-0031.

- F. Chiossi et al., “Adapting visualizations and interfaces to the user,” it - Information Technology, vol. 64, pp. 133–143, 2022, doi: 10.1515/itit-2022-0035.


© SFB-TRR 161 | Quantitative Methods for Visual Computing | 2019.