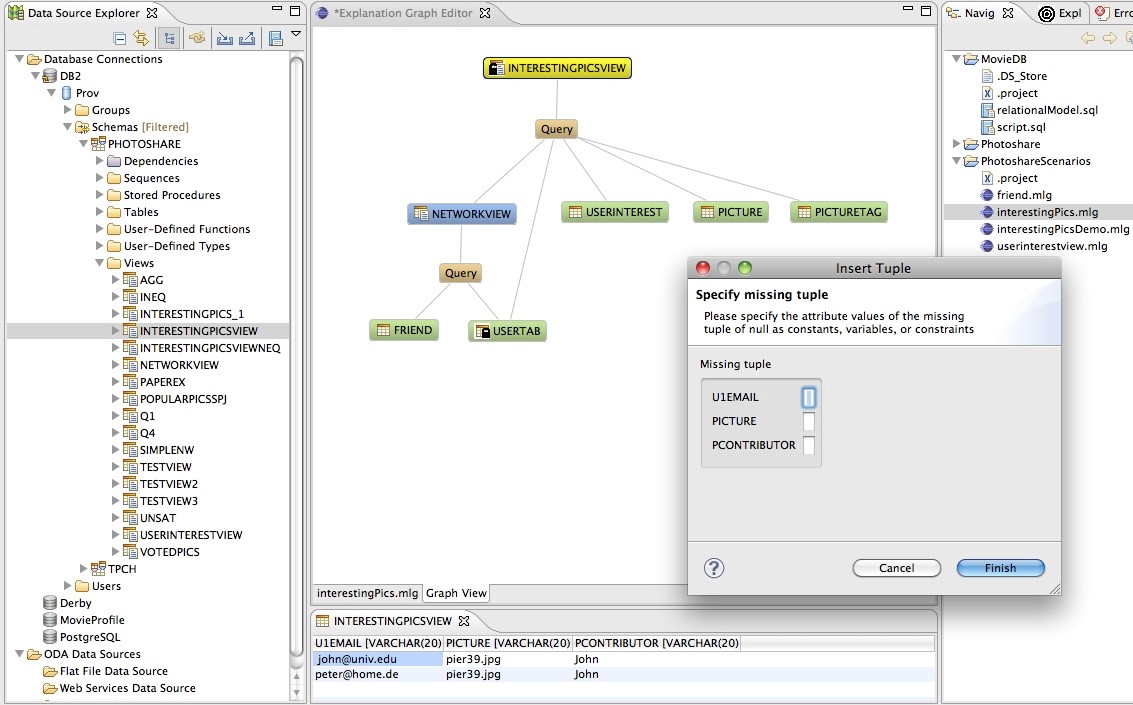

D03 | Visual Exploration and Analysis of Provenance Data

Provenance techniques are increasingly deployed to analyze or debug complex data-processing applications, or to ensure their understandability and repeatability. This produces large volumes and a wide variety of provenance data. The long-term goal of this project is to leverage visualization techniques to explore provenance data efficiently and effectively. In the first funding period, we will focus on visualizing the full provenance data generated for one run of a data-processing pipeline. This involves both quantitatively identifying suitable visualizations for various provenance types and ensuring user-friendly provenance data generation and visualization in existing data-processing pipelines.

Research Questions

What are suitable visualization techniques for different settings defined by varying types of provenance and applications?

Which metrics can quantitatively assess provenance data visualization quality?

How can such metrics support tuning the processes that generate and manage provenance data?

Which types of provenance are best suited to achieve the goals of reproducibility and predictability for selected visual computing processes?

Publications

- H. Ben Lahmar and M. Herschel, “Collaborative filtering over evolution provenance data for interactive visual data exploration,” Information Systems, vol. 95, p. 101620, 2021, doi: 10.1016/j.is.2020.101620.

- V. Bruder et al., “Volume-Based Large Dynamic Graph Analysis Supported by Evolution Provenance,” Multimedia Tools and Applications, vol. 78, no. 23, 2019, doi: 10.1007/s11042-019-07878-6.

- H. Ben Lahmar, M. Herschel, M. Blumenschein, and D. A. Keim, “Provenance-based Visual Data Exploration with EVLIN,” in Proceedings of the Conference on Extending Database Technology (EDBT), 2018, pp. 686–689. doi: 10.5441/002/edbt.2018.85.

- C. Schulz, A. Zeyfang, M. van Garderen, H. Ben Lahmar, M. Herschel, and D. Weiskopf, “Simultaneous Visual Analysis of Multiple Software Hierarchies,” in Proceedings of the IEEE Working Conference on Software Visualization (VISSOFT), IEEE, 2018, pp. 87–95. [Online]. Available: https://ieeexplore.ieee.org/document/8530134/

- S. Oppold and M. Herschel, “Provenance for Entity Resolution,” in Provenance and Annotation of Data and Processes. IPAW 2018. Lecture Notes in Computer Science, vol. 11017, K. Belhajjame, A. Gehani, and P. Alper, Eds., Springer International Publishing, 2018, pp. 226–230. doi: 10.1007/978-3-319-98379-0_25.

- M. Herschel, R. Diestelkämper, and H. Ben Lahmar, “A Survey on Provenance - What for? What form? What from?,” The VLDB Journal, vol. 26, pp. 881–906, 2017, doi: 10.1007/s00778-017-0486-1.

- R. Diestelkämper, M. Herschel, and P. Jadhav, “Provenance in DISC Systems: Reducing Space Overhead at Runtime,” in Proceedings of the USENIX Conference on Theory and Practice of Provenance (TAPP), 2017, pp. 1–13. doi: 10.5555/3183865.3183883.

- M. A. Baazizi, H. Ben Lahmar, D. Colazzo, G. Ghelli, and C. Sartiani, “Schema Inference for Massive JSON Datasets,” in Proceedings of the Conference on Extending Database Technology (EDBT), 2017, pp. 222–233. doi: 10.5441/002/edbt.2017.21.

- H. Ben Lahmar and M. Herschel, “Provenance-based Recommendations for Visual Data Exploration,” in Proceedings of the USENIX Conference on Theory and Practice of Provenance (TAPP), 2017, pp. 1–7.

- M. Herschel and M. Hlawatsch, “Provenance: On and Behind the Screens,” in Proceedings of the ACM International Conference on the Management of Data (SIGMOD), F. Özcan, G. Koutrika, and S. Madden, Eds., ACM, 2016, pp. 2213–2217. doi: 10.1145/2882903.2912568.

© SFB-TRR 161 | Quantitative Methods for Visual Computing | 2019.